PINN vs FEM II - Theoretical connection between Finite Elements and Single-Layer Perceptron

(Co-authored by Sean De Marco and Lloyd Fung)

The mathematics behind neural networks is often seen as a black box. That was definitely our impression when we first interacted with PINNs. Nonetheless, if we break down a Neural Network (NN) all the way to its most basic constituent unit, a Single-Layer Perceptron (SLP), we can start to uncover how it actually works. And I promise, after reading this, you’ll find a whole new appreciation of its similarity with the older, more established cousin that is the Finite Element Method (FEM).

(As a side note, the universal approximation theorem was proven also on a SLP. This post gave a good introduction to the theorem. If you’re familiar with the theorem, you’ll find that it is not too dissimilar from how FEM approximates PDE solutions.)

The example - a 1D problem

To illustrate the similarities between the two methods, we’ll start with the most generic and simplistic example – solving a one-dimensional PDE problem. (It should really be called an ODE since it’s 1D, but you get the idea.) Specifically, we will be trying to find a one-dimensional function $u(x)$ of space $x$ that fulfills a differential equation $\mathcal{L}(u(x),x)=0$$.

Single-Layer Perceptron

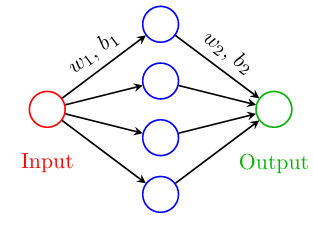

The Single-Layer Perceptron is the most simple NN one can write down. In the simple case of approximating a one-dimensional function $u(x)$, for example, where $x$ is the input, $\tilde{u}$ the approximated output, the approximating SLP can be written as

\[\tilde{u}(x) = \mathbf{W}^{(2)} \, \sigma^{(1)} \left( \mathbf{W}^{(1)} {x} + \mathbf{b}^{(1)} \right) + b^{(2)}\]where, for an SLP with $H$ hidden nodes,

\[\mathbf{W}^{(1)} \in \mathbb{R}^{H \times 1}, \quad x \in \mathbb{R}^{1 \times N}, \quad \mathbf{b}^{(1)} \in \mathbb{R}^{H \times 1}, \quad \mathbf{W}^{(2)} \in \mathbb{R}^{1 \times H}, \quad b^{(2)} \in \mathbb{R}^{1 \times 1}.\]The activation function $\sigma$ here can be anything from $\tanh$ to ReLU, applied elementwise. The universal approximation theorem tells us that, as long as $\sigma$ is “discriminatory”, with enough $H$, this unit can approximate any function.

Normally, to train a SLP like this, we treat all the weights $\mathbf{W}^{(\ast)}$ and biases $\mathbf{b}^{(\ast)}$ as trainable parameters to the optimisation that minimise the loss, in this case, the difference between the SLP evaluation and the data at sampled points $x_i$,

\[\sum_i \| \| u(x_i) - \tilde{u}(x_i) \| \|.\]However, to make the comparison with FEM more appearant, we are going to make one additional assumption: we are going to fix all parameters except the last layer weight $\mathbf{W}^{(2)}$ (or $n$ if you have $n$ layers), and put the last layer bias $b^{(2)}$ to zero. Putting $b^{(2)}=0$ is a fairly straight forward simplification, since it is simply shifting $\tilde{u}$ by a constant. However, fixing everything except $\mathbf{W}^{(2)}$ might come as a surprise - why would you want to do this?

Well, as it turns out, if only the last layer is trained, everything becomes linear! If the loss is simply an L2-Norm, then we are effective solving a linear least square problem, meaning we can use linear regression to get the optimal $\mathbf{W}^{(2)}$ that minimises the loss! This technique is known as Extreme Learning Machine (ELM). We don’t have time to do a deep dive into ELMs. All you need to know is that this trick enables regression-styled training.

Finite Element Methods (FEM)

Now, let’s take a look NN’s distant cousin - FEM. In Finite Elements, we typically approximate $u(x)$ by a linear sum of the weighted basis functions belonging to each element such that

\[\hat{u}(x) = \sum_{j=1}^{H}U_j \varphi_j\]where $\hat{u}(x)$ is the approximation to $u(x)$ at a given $x$, and $U_j$ and $\varphi_j$ the weights and trial basis functions respectively. In FEM, the trial basis function, $\varphi_j$ is an arbitrary choice, but they typically have compact support in a subdomain defined by the mesh. The compact support also enables sparsity in the matrix it formulates when applied to a PDE (more on that later). Here, we pick a simple and low-order basis - a piecewise-linear basis function:

\[\varphi_j(x) = \begin{cases} \frac{x - x_{j-1}}{x_j - x_{j-1}}, & x_{j-1} < x < x_j \\ \frac{x_{j+1} - x}{x_{j+1} - x_j}, & x_j < x < x_{j+1} \\ 0 & otherwise \end{cases}\]If we further assume that FEM is applied on a equispaced grid, i.e. $x_j - x_{j-1} = \Delta x$ for all $j=1…H$, then we can simplify the basis function as

\[\varphi_j(x) = \begin{cases} \frac{x - b_{j} + \Delta x }{\Delta x}, & b_j - \Delta x< x < b_j \\ \frac{b_{j} + \Delta x - x}{\Delta x}, & b_j < x < b_j + \Delta x \\ 0 & otherwise \end{cases}\]or

\[\varphi_j(x) =\varphi(x - b_j); \; \varphi(x) = \begin{cases} \frac{x + \Delta x}{\Delta x}, & - \Delta x< x < 0\\ \frac{\Delta x - x}{\Delta x}, & 0 < x < \Delta x \\ 0 & otherwise \end{cases}\]The Comparison

Notice the similiarity yet? Let’s restate SLP and FEM approximation to $u(x)$. The SLP approximation to $u(x)$, under the assumption $b^{(2)}=0$, can be written as

\[\tilde{u}(x) = \sum_{j=1}^{H} {W}_j^{(2)} \, \sigma^{(1)} \left( {W}_j^{(1)} {x} + {b}_j^{(1)} \right).\]Meanwhile, FEM approximate $u(x)$ as

\[\hat{u}(x) = \sum_{j=1}^{H} U_j \varphi(x-b_j)\]If we let

\begin{equation} \label{eq:1} {W}_j^{(2)} \mapsto U_j,

{W}_j^{(1)} \mapsto 1,

{b}_j^{(1)} \mapsto - b_j, \end{equation}

then the only difference between FEM and SLP comes down to only the choice of activation function $\sigma$ and trial function $\varphi$. However, remember that the trial function $\varphi$ is an arbitrary choice, so there is no reason why you cannot pick a discriminatory activation function as a trial function (although you may lose sparsity without compact support). In other words, we have demonstrated that a simple SLP can be cast into the FEM formulation, and that they can be made equivalent with the right assumptions!

What about the test functions? What’s that equivalent to in SLP?

While the above derivations has already shown the equivalence between SLP and FEM, the derivation for FEM is, as some of you can tell, not yet complete. Specifically, we have only shown the trial function, and not the test function yet.

In FEM, test functions are applied to the range space of the residue, i.e. the space where the model loss is computed. Therefore, we will need a mathematical object to represent the PDE. Let

\[\mathcal{L} u(x) = 0\]represents the PDE. For example, a Poisson equation will give $\mathcal{L} u(x) = d^2u/dx^2 - f(x)$, where $f(x)$ is the forcing function. The variation formulation of FEM recast the PDE into a minimisation for the losses

\[\sum_{i=1}^N \int v_i(x) \mathcal{L} \hat{u}(x) dx\]for each test function $v_i(x)$. Again, in the most general sense within FEM, $v_i(x)$ can be any arbitary functions, but typically we use compact support functions of the same form, shifted according to a structured grid. The most common choice is to use the same function as the trial function,

\[v_i(x) = \varphi(x-b_i),\]giving rise to a Galerkin method.

To recover the typical loss function used in SLP, however, will require a different substitution for $v_i(x)$. Specifically, in most ML applications, the loss is formulated in a pointwise manner, giving loss like the L2 pointwise loss

\[\sum_{i=1}^N || \mathcal{L} \tilde{u}(x_i) ||_2^2.\]This can be recreated from the variational form in FEM by substituting the dirac delta function into the trial function $v_i(x) = \delta(x-b_i)$. Hence, the loss used in typical ML application actually corresponds to a specific test function in FEM.

This insight also invites alternative loss formulation for PINN in general. Even in the case of a multi-layer perceptron or other more sophicated NN architecture, one can always replace the squared pointwise loss with

\[\sum_{i=1}^N \int v_i(x) \mathcal{L} \tilde{u}(x) dx,\]where $v_i(x)$ can be of any arbitrary choice. However, it may come at the price of more computation for evaluating the integral, especially when the first layer is not fixed and re-computation of the integral is required at every iteration.

So what happens if we optimise the first layer in SLP?

Now that we know the equivalence between a SLP and FEM at the specific case when the first layer is fixed according to \eqref{eq:1}, what happens in an actual training of SLP where the first layer is no longer fixed?

Let’s start by looking at what the biases are doing in an SLP in this 1D case. Assuming weight is still fixed, the main role of biases $\mathbf{b}^{(1)}$ are to shift the activation function along $\mathbf{x}$ for each node $j$. In the equivalent FEM world, shifting $\mathbf{b}^{(1)}$ would corresponds to shifting the mesh’s grid line. Meanwhile, the role of the weight $\mathbf{W}^{(1)}$ is to rescale $\mathbf{x}$ for each node $j$. The FEM equivalent here would be to size the region of domain where the trial function covers. Therefore, varying $\mathbf{W}^{(1)}$ and $\mathbf{b}^{(1)}$ in SLP is equivalent to changing the mesh in FEM. In other words, when the first layer is optimsed, the equivalent in FEM would be to have the mesh optimised. The SLP is adaptively meshed FEM in disguise!

With some caveat…

With this insight, one might have immediately jumped to the conclusion that SLP is better, since SLP is more adaptive. However, there are some subtle caveats with the comparison. In adaptive meshing in FEM, the components of $\mathbf{W}^{(1)}$ and $\mathbf{b}^{(1)}$ are not independent variables to be optimised. They are defined by the mesh such that each trial function can only be non-zero in a specific region of the domain, even when the mesh itself is being optimised. The SLP, in contrast, is not constrained in the optimisation. Furthermore, the activation function typically used in SLP does not have compact support – all nodes represent functions that cover the entire domain. This lack of locality has two critical consequences:

- Since the compact support in typical trial functions in FEM is the underlying reason that gives rise to sparsity in the matrix FEM formulates in solving the PDE, the lack of compact support means solving the PDE with an SLP through ELM is going to be much more computationally expensive. A FEM solution to the Poisson equation, for example, will have a computational cost of $\mathcal{O}(H)$. A SLP trained by ELM, by comparison, will have a computational cost of $\mathcal{O}(H^3)$.

- With that said, the effective adaptive meshing of SLP means less mesh is needed to resolve a solution of the same complexity. Moreover, there is an argument to be made that with more layers, information in a solution can be further compressed, especially in higher-dimensional problems. It is for this reason that the overall computational cost of training a PINN is not trivial and may very well be problem-dependent.

- Since activation functions in SLP are typically globally supported, it also means information travels through the entire domain during training. In contrast, FEM obeys the locality of information with its locally supported activation functions. In other words, FEM naturally obeys the information structure of the PDE, but SLP disregards that. This is the Achilles’ heel of PINN – its flexibility and expressivity come at the cost of potential inconsistency with how information flows in physical systems, as described by the PDE.

- Compact support of the trial functions also improves the conditioning of resulting matrix inversion. Since FEM’s trial functions are constrained by the mesh, the basis are guaranteed to be orthogonal in the function space. In contrast, in an SLP, the hidden layer’s node functions can become very collinear, worsening the problem’s conditioning. My friend Ben Moseley has done some nice work that highlights the importance of balancing the locality of information and global support in PINN through explicit domain separation.

- The violation of how information travels in physical systems is sometime viewed as breaking “causality”. There has been some work trying to addres the problem from this perspective.

Summary

In this post, we have established the theoretical connection between an SLP and FEM and shown how we can cast an SLP as an FEM. The “training” of such an SLP by ELM is also equivalent to solving a FEM. With this theoretical comparison established, the pros and cons immediately jump out. FEM is faster because of its compact support. It also makes sure the hidden nodes are not similar to each other, ensuring the matrix inverted is well conditioned. The use of sigmoid activation functions in a vanilla SLP, in contrast, does not have compact support. However, with a flexible first layer, it trades that convergence guarantee and well-conditioning for more flexibility. As a result, an SLP is typically more expressive than an FEM. SLP can represent the same solution with less $H$ than FEM, given SLP’s $\mathbf{b}^{(1)}$ and $\mathbf{W}^{(1)}$ can adapt to resolve location with more complexity. This is a main argument for PINN - it can represent complex solutions with less parameters due to this flexibility. We will see that adaptibility in action in the next post.

Lastly, we should also highlight one important advantage we have yet to demonstrate – NN works particularly well in high-dimensional systems because of its ability to compress data and overcome the “curse of dimensionality”. This is largely to do with the depth of NN, which FEM cannot replicate. However, even in an SLP, one can already see its advantage due to the flexibility with its first layer. In higher-dimensional systems (e.g. $u(x,t)$), the first layer weight plays another important role – it gives the orientation of the activation function in the higher-dimensional space. This has major implications, both in how SLPs are able to represent solutions and the direction of information travel and locality of information in the solution. We will explore more in-depth on this in a future blog post.

Next time

In the next blog, we are going to show some examples, where we will show that, despite being mathematically equivalent, the two methods can yield very different results due to the different way they were trained. We are also going to touch upon an important feature that plagued PINN’s ability to represent complex solutions of PDEs, called spectral bias. There will also be more in-depth discussion on which method gives better performance from both a theoretical perspective and from experimentation.

See you in the next blog post!